MENLO PARK, Calif., Jan. 30, 2024 – Today, Zinn Labs is introducing the industry's first event-based gaze-tracking system for VR/MR headsets and smart frames. The key advantage of event-based sensing is that it captures the signals relevant to eye tracking faster and more efficiently.

Kevin Boyle, CEO of Zinn Labs, explains, "Zinn Labs' event-based gaze-tracking reduces bandwidth by two orders of magnitude compared to video-based solutions, allowing it to scale to previously impractical applications and form factors." Because Zinn Labs' 3D gaze estimation has a low compute footprint, it can support low-power modes for smart wearables that look like normal eyewear. In other configurations, the gaze tracker can deliver low-latency, slippage-robust tracking at update rates above 120 Hz for high-performance applications.

Gaze-tracking Applications

Smart eyewear is a rapidly emerging category that can serve as an always-available portal to generative AI. In a variety of application demos from Zinn Labs, the attention signal provided by gaze seamlessly connects AI output to the user's intentions.

As AR and MR computing devices move toward increasingly intuitive user interfaces, gaze tracking becomes a prerequisite for several component technologies. For example, gaze is the most natural way to interact with a digital environment. Zinn Labs leverages the Prophesee GenX320 sensor to offer a high-refresh-rate, low-latency gaze-tracking solution, an attractive option for those looking to push the boundaries of responsiveness and realism in head-worn display devices.

Zinn Labs DK1 Available Soon

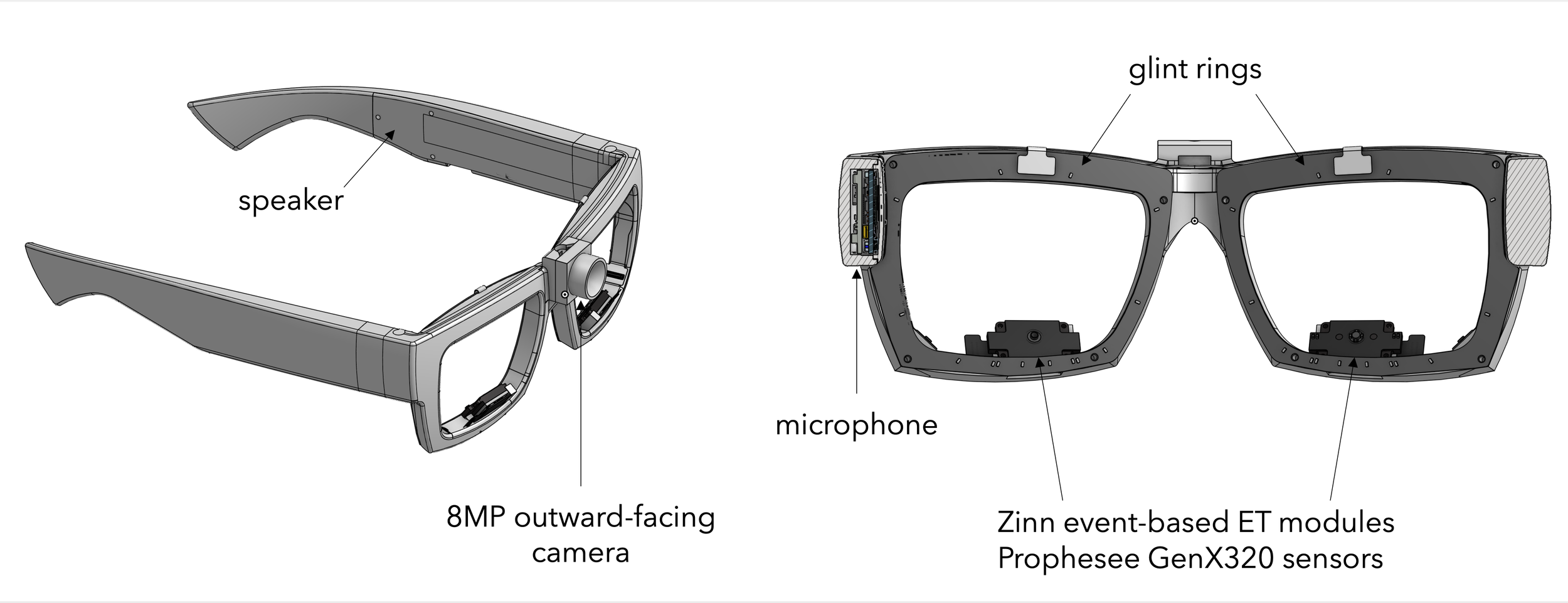

The Zinn Labs DK1 Gaze-enabled Eyewear Platform is a development kit with a binocular event-based gaze-tracking system and an outward-facing world camera, powered by Zinn Labs' 3D gaze estimation algorithms. The DK1 uses Prophesee GenX320 event-based Metavision® sensors, the smallest event-based sensors on the market.

“Frame-based acquisition techniques don’t meet the speed, low latency, size and power requirements of AR/MR wearables and headsets that are practical to use and provide a realistic user experience. Our GenX320 was developed specifically with these real-time and processing efficiency needs in mind, delivering the advantages of event-based vision to a wide range of products, such as those that will be enabled by Zinn Labs’ breakthrough AR/MR platform,” said Luca Verre, CEO and co-founder of Prophesee.

The DK1 supports slippage-robust gaze tracking with sub-1° accuracy and runs at 120 Hz with 10 ms end-to-end latency. The development kit comes with demo applications, such as the wearable AI assistant featured here, and can be used for performance evaluation or customer proof-of-concept integration projects.

Learn more at SPIE AR | VR | MR 2024

Zinn Labs will be presenting the technology and demonstrating the DK1 at SPIE AR | VR | MR 2024 on January 30th and 31st in San Francisco, CA. Check out www.zinnlabs.com for more information or contact Zinn Labs to inquire about development kits and licensing.

About Zinn Labs

Zinn Labs, Inc. designs pupil and gaze tracking systems for head-worn devices, as well as novel user interfaces that only gaze can enable. Zinn Labs' technologies combine cutting-edge computer vision research with a deep understanding of the human visual system. By capturing user attention through gaze, they aim to bring a vision-first neural interface to a new generation of consumer devices.
